One Compression Ratio Doesn’t Fit All

Your brain runs three memory systems. Your AI agent runs one. That’s the bug.

Your brain runs three memory systems because a childhood birthday party and the periodic table serve completely different cognitive purposes. Your AI agent runs one. That’s the bug[1].

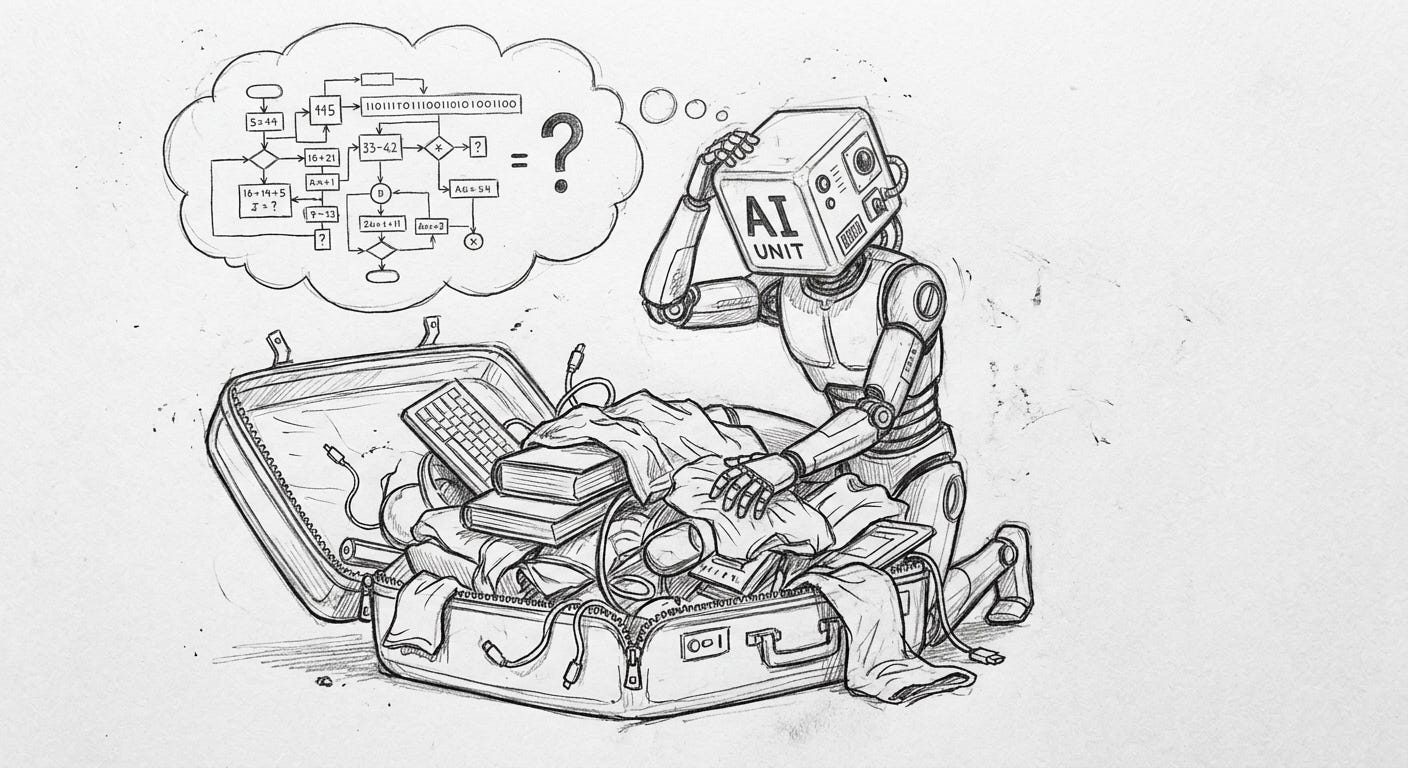

Let’s start with a scenario. You’re packing for a two-week trip. One suitcase. You can’t bring everything.

The obvious move is to start eliminating: formal wear you probably won’t need, that third pair of shoes, the book you optimistically think you’ll finish[2]. You compress your wardrobe down to what fits.

But here’s what you don’t do: you don’t compress everything the same way. You don’t fold your suits the same way you roll your t-shirts. You don’t pack your toiletries the same way you pack your laptop. Different items get different treatment based on fragility, frequency of use, and how badly things go if you get it wrong.

Packing is compression with awareness of what you’re compressing and why. Your agent is the person who puts everything in a trash bag and sits on it.

Now think about how AI agents compress context. A conversation gets too long, so the system summarizes it. Maybe it takes the last 50 turns and condenses them into a paragraph. Uniform compression. Everything treated the same.

The critical decision from turn 12? Compressed to one line. The casual banter from turn 30? Also compressed to one line. The error message the agent got from a tool call in turn 8, which will turn out to be important in turn 60? Gone. Summarized away because the compression didn’t know it mattered.

The agent packed the suitcase by just squeezing everything equally. The suit got wrinkled. The laptop got crushed. The toiletries leaked all over everything. And now your shampoo-soaked laptop is confidently hallucinating answers about a decision you made three days ago. Have a nice trip.

So what is your brain doing? The brain doesn’t recall a childhood memory with the same fidelity as what you had for breakfast[3]. Different retrieval modes for different purposes. This is one of the most well-established findings in cognitive psychology, and agent builders keep ignoring it[4].

Gist memory preserves the meaning without the details. You remember your high school graduation happened and it was hot outside and your uncle gave a weird toast that somehow involved both Jesus and cryptocurrency[5]. You don’t remember the exact words, the seating arrangement, or what you ate. The essence survived. The specifics degraded. And that’s fine, because the purpose of that memory is narrative continuity, not forensic reconstruction.

Episodic memory preserves the experience. Sensory details, emotions, temporal context. Your first car accident. The phone call when someone died. The moment you solved a problem that had been haunting you for weeks. These memories are expensive to maintain, and the brain is selective about which experiences get this treatment. Usually: emotionally significant, surprising, or consequential events. Not routine ones.

Semantic memory preserves facts stripped of context. You know that Paris is the capital of France. You almost certainly don’t remember the lesson, the book, or the conversation where you learned it. The original episode is gone. The extracted fact remains, compressed to its essence and integrated into your general knowledge. Your brain ran `rm -rf` on the source material and kept the output. Ruthless, efficient, and slightly terrifying when you think about it too long.

Three systems. Three compression ratios. One brain. Millions of years of evolution to get here. And we’re over here trying to replicate it with a single summarize() call. Bold strategy, Cotton.

The gist system runs lossy compression at high ratios. Keep the shape, lose the texture. Good enough for most autobiographical memory, and it costs almost nothing to maintain.

The episodic system runs nearly lossless compression at low ratios. Keep everything. Expensive, limited capacity, reserved for moments that matter.

The semantic system runs extractive compression. Don’t summarize the experience. Extract the fact, discard the wrapper entirely. The most aggressive compression possible, and the most useful for downstream reasoning.

Your brain doesn’t choose one approach. It runs all three simultaneously, routes different information to different systems based on significance, and retrieves from each system differently depending on the current need.

Most agent systems that do any compression at all use a single approach: take the old context, ask a model to summarize it, replace the original with the summary. One pass, one compression ratio, applied uniformly.
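To make that pattern concrete, here is a minimal sketch of uniform compression. Everything in it is an assumption for illustration: `compress_uniformly` is a hypothetical helper, and `fake_summarize` stands in for whatever model call a real system would make.

```python
from typing import Callable, List


def compress_uniformly(
    turns: List[str], keep_last: int, summarize: Callable[[str], str]
) -> List[str]:
    """Replace everything except the last `keep_last` turns with one summary.

    Assumes keep_last >= 0. Every older turn gets the same treatment,
    which is exactly the weakness described above.
    """
    split = max(len(turns) - keep_last, 0)
    old, recent = turns[:split], turns[split:]
    if not old:
        return list(recent)
    return [summarize("\n".join(old))] + recent


def fake_summarize(text: str) -> str:
    """Stand-in for a model call: keeps only the first 40 characters."""
    return text[:40] + "..."


turns = [f"turn {i}" for i in range(1, 61)]
turns[7] = "turn 8: tool error ECONNRESET"  # the detail that matters later

compressed = compress_uniformly(turns, keep_last=10, summarize=fake_summarize)
print(len(compressed))               # 1 summary + 10 recent turns
print("ECONNRESET" in compressed[0])  # the error did not survive
```

A real summarizer is smarter than truncation, but the structure is the same: one function, one ratio, no knowledge of which turn mattered.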

This breaks in predictable ways. So predictable, in fact, that I could write a summary of how it breaks, and the irony of that would be lost on exactly the systems we’re discussing.

Decision context gets flattened. “After extensive debugging, we determined the issue was a race condition in the WebSocket handler and fixed it by adding a mutex” is a fine summary. But it loses the reasoning. Why did you suspect the WebSocket handler? What other hypotheses did you eliminate? What did the error look like? If the same bug comes back in a slightly different form, the summary won’t help. The episodic detail would have.

Preferences and patterns get lost. “We discussed the UI and made some changes” erases the nuance that the user hates modals, prefers inline editing, and asked three times for better keyboard shortcuts. These aren’t facts about the UI. They’re facts about the user. They should be extracted as semantic knowledge, not summarized as episodes.

Emotional context evaporates. The user was frustrated when they said “just make it work.” They were excited when they said “let’s try something crazy.” These signals matter for how the agent should respond next time. Uniform summarization strips emotional metadata because it treats all text as informational. It’s like reading the transcript of a fight you had with your partner. The words are there. The tone that would have kept you alive is completely absent[6].

Temporal relationships disappear. “This happened after that” and “this was caused by that” get collapsed into a flat summary where everything is equally present-tense. But the order mattered. The causal chain mattered. Compression killed the timeline.

The common thread: uniform compression assumes all information serves the same purpose. It doesn’t. And the mismatches between compression strategy and information type produce errors that are invisible in the moment and expensive later. You don’t notice the problem until you need the thing that got compressed away. By then, your agent is already two paragraphs deep into a confident answer built on vibes.

If one compression ratio doesn’t fit all, what does a multi-strategy approach actually look like? Without getting into full architecture, the principles are clear:

Classify before compressing. Before you summarize anything, you need to know what kind of information it is. Is this a decision? An observation? A preference? An error? A creative idea? A social interaction? Different classifications need different compression strategies. This seems obvious. “Know what you have before you decide what to do with it” is the kind of advice your parents gave you about the fridge. And yet.

Match strategy to purpose. Decisions get episodic treatment (preserve the reasoning, the alternatives considered, the rationale). Facts get semantic treatment (extract the fact, discard the episode). Routine interactions get gist treatment (keep the shape, lose the specifics). Errors get almost lossless treatment (you’ll need the details when it happens again).
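The classify-then-route idea can be sketched in a few lines. This is a toy under stated assumptions, not a proposed implementation: the `Kind` and `Strategy` names are hypothetical, the mapping follows the pairings in the paragraph above (with preferences treated as facts about the user), and the keyword classifier is a placeholder for what would more plausibly be a model call.

```python
from enum import Enum


class Kind(Enum):
    DECISION = "decision"
    FACT = "fact"          # includes user preferences
    ROUTINE = "routine"
    ERROR = "error"


class Strategy(Enum):
    EPISODIC = "episodic"            # keep reasoning and alternatives
    SEMANTIC = "semantic"            # extract the fact, drop the episode
    GIST = "gist"                    # keep the shape, lose the specifics
    NEAR_LOSSLESS = "near_lossless"  # keep almost everything


# Pairings taken from the prose; the structure itself is an assumption.
STRATEGY_FOR = {
    Kind.DECISION: Strategy.EPISODIC,
    Kind.FACT: Strategy.SEMANTIC,
    Kind.ROUTINE: Strategy.GIST,
    Kind.ERROR: Strategy.NEAR_LOSSLESS,
}


def classify(turn: str) -> Kind:
    """Toy keyword classifier (hypothetical rules, for illustration only)."""
    text = turn.lower()
    if "error" in text or "traceback" in text:
        return Kind.ERROR
    if "we decided" in text or "let's go with" in text:
        return Kind.DECISION
    if "i prefer" in text or "i hate" in text:
        return Kind.FACT
    return Kind.ROUTINE


def route(turn: str) -> Strategy:
    """Classify first, then look up the compression strategy."""
    return STRATEGY_FOR[classify(turn)]


for turn in [
    "Tool returned Error: connection reset",
    "We decided to add a mutex",
    "I hate modals",
    "thanks, sounds good",
]:
    print(f"{turn!r} -> {route(turn).value}")
```

The point of the shape is that the classifier and the compressors are separate pieces. You can swap the keyword rules for something better without touching the routing table.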

Compress at different rates. Not everything needs to be compressed at the same time. Some context is “hot” (actively relevant, should stay full fidelity). Some is “warm” (recently relevant, worth keeping in compressed form). Some is “cold” (not currently relevant, can be aggressively compressed or offloaded to external storage). This mirrors how the brain treats memory consolidation during sleep: recent memories get replayed, strengthened or pruned, and reorganized based on significance.
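A rough sketch of the hot/warm/cold split, assuming recency of last use is an acceptable proxy for relevance (a real system would likely also look at the current task). The thresholds and the `tier` function are illustrative assumptions, not recommendations.

```python
from datetime import datetime, timedelta

HOT_WINDOW = timedelta(hours=1)  # assumed threshold: keep at full fidelity
WARM_WINDOW = timedelta(days=7)  # assumed threshold: keep in compressed form


def tier(last_used: datetime, now: datetime) -> str:
    """Assign a memory to a tier based on how recently it was used."""
    age = now - last_used
    if age <= HOT_WINDOW:
        return "hot"
    if age <= WARM_WINDOW:
        return "warm"
    return "cold"  # candidate for aggressive compression or offloading


now = datetime(2024, 1, 10, 12, 0)
print(tier(now - timedelta(minutes=5), now))
print(tier(now - timedelta(days=2), now))
print(tier(now - timedelta(days=30), now))
```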

Preserve the metadata that compression wants to kill. Timestamps. Emotional register. Causal links. Confidence levels. These are the first things that get stripped in a summary and the hardest things to reconstruct later. A good compression strategy preserves metadata even when it compresses content. Think of it like cooking: you can reduce a sauce, but if you boil off all the wine, you just have tomato paste with regret.
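One way to keep metadata alive is to store it in fields the summarizer never touches. The sketch below assumes a hypothetical `MemoryRecord` shape; the field names mirror the list above and are not drawn from any particular framework.

```python
from dataclasses import dataclass, field, replace
from datetime import datetime
from typing import List


@dataclass(frozen=True)
class MemoryRecord:
    content: str
    timestamp: datetime
    emotional_register: str = "neutral"
    caused_by: List[str] = field(default_factory=list)  # ids of earlier records
    confidence: float = 1.0


def compress(record: MemoryRecord, summary: str) -> MemoryRecord:
    """Swap in shorter content; carry every metadata field over unchanged."""
    return replace(record, content=summary)


original = MemoryRecord(
    content="User said 'just make it work' after the third failed deploy.",
    timestamp=datetime(2024, 1, 10, 9, 30),
    emotional_register="frustrated",
    caused_by=["deploy-failure-3"],
    confidence=0.9,
)
compressed = compress(original, "User wanted the deploy fixed.")
print(compressed.emotional_register, compressed.caused_by, compressed.timestamp)
```

The content shrank. The timestamp, the frustration, and the causal link did not.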

Here’s another thought experiment that makes the compression issue concrete. Stay with me, this involves math but the kind where you nod along and trust the conclusion.

An AI agent has had 100 conversations with a user over the past month. Each conversation is about 50 turns. That’s 5,000 turns of interaction, maybe 2 million tokens of raw text. No context window in the world holds all of that.

So you compress. The question is how: uniform or multi-strategy.

In the uniform approach, you summarize each conversation into a single paragraph. That gives you 100 paragraphs at roughly 150 tokens each, about 15,000 tokens. It fits the context window, but it loses the thread. You can’t distinguish important conversations from routine ones. The summary of a life-changing career discussion gets the same 150 tokens as the summary of a “what’s the weather” exchange. Democracy in action, except nobody asked for it.

The multi-strategy approach allocates fidelity unevenly. The five conversations where major decisions were made get episodic treatment: the full reasoning chains are preserved, at about 2,000 tokens each.

The 30 conversations about ongoing projects get gist treatment: key outcomes and current status, at about 200 tokens each.

The 20 casual chats get semantic extraction only: any new preferences, facts, or relationship dynamics, stored as structured facts at about 50 tokens each.

The 45 routine conversations (quick questions, tool usage, status checks) get log-level compression: a single line such as “45 tool-use sessions, primary topics: deployment, code review, email,” at about 100 tokens in total.

Same 100 conversations. Dramatically different output. The multi-strategy approach uses roughly the same number of total tokens (about 17,000 vs 15,000, surprisingly close) but preserves far more useful information because it allocated fidelity where it mattered. It’s not about using less space. It’s about using the space on the right things. A concept that applies to context windows, suitcases, and apparently not to the way I organize my garage[7].
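For anyone who would rather check the arithmetic than nod along, here it is using the per-category figures quoted above. The variable names are just labels for this sketch.

```python
# Uniform: every conversation gets the same ~150-token paragraph.
uniform_total = 100 * 150

# Multi-strategy: (number of conversations, tokens per summary).
per_conversation = {
    "episodic": (5, 2000),  # major decisions
    "gist": (30, 200),      # ongoing projects
    "semantic": (20, 50),   # casual chat, stored as facts
}
routine_total = 100  # 45 routine conversations collapsed into one log line

multi_total = (
    sum(count * tokens for count, tokens in per_conversation.values())
    + routine_total
)

print(uniform_total)  # 15000
print(multi_total)    # 17100
```

The two budgets land within a couple of thousand tokens of each other. The difference is where those tokens go: more than half of the multi-strategy budget is spent on five conversations.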

The agent remembers the important stuff in detail and the routine stuff in outline. Like a brain.

Like your brain. You remember yesterday’s breakfast (if it was unusual) or you don’t (if it was the same thing you always eat). That’s not forgetfulness. That’s intelligent compression. The system is working correctly. Your brain decided that your 4,000th bowl of cereal didn’t deserve a full neural trace, and it was right. If anything, the fact that you’re still eating the same cereal is the real problem here, but that’s between you and your therapist.

If you’ve been following this series, a pattern is emerging. The context window is valuable real estate, so stop treating it like a junk drawer. Retrieval needs purpose, not just similarity. And now: compression needs strategy, not just summarization.

They’re all pointing at the same thing: the intelligence of an agent system isn’t just in the model. It’s in the pipeline that decides what the model gets to see. The model is the brain. The pipeline is the body[8]. And we’ve been building brains without bodies. It’s giving “brain in a jar” energy, except the jar is a 500K token context window filled with uncompressed tool output from six tasks ago.

[1] It’s like discovering your self-driving car has one gear. Sure, it technically works. But also, no.

[2] You were never going to finish that book. You haven’t finished a book on vacation since 2017. The Kindle is a prop. Be honest with yourself.

[3] Unless breakfast was a disaster. You will remember the morning you accidentally put salt in your coffee until the heat death of the universe.

[4] Tulving’s (1972) distinction between episodic and semantic memory. Brainerd and Reyna’s fuzzy-trace theory for gist vs. verbatim. Squire’s taxonomy of long-term memory systems. This is textbook cognitive psychology, not cutting-edge speculation. The neuroscience has been here for decades. Agent architecture is just now catching up. As usual, the CS people are speedrunning discoveries that psych figured out during the Carter administration.

[5] Everyone has this uncle. If you don’t have this uncle, you might be this uncle.

[6] Anyone who has ever received a text that just says “fine” knows exactly what I mean. There are fourteen different meanings of “fine” and only one of them is actually fine.

[7] My garage is the physical manifestation of uniform compression. Everything equally accessible, which means nothing is findable. I haven’t seen my leaf blower since October.

[8] You were thinking about robot bodies probably. We are not there yet, focus on this part.