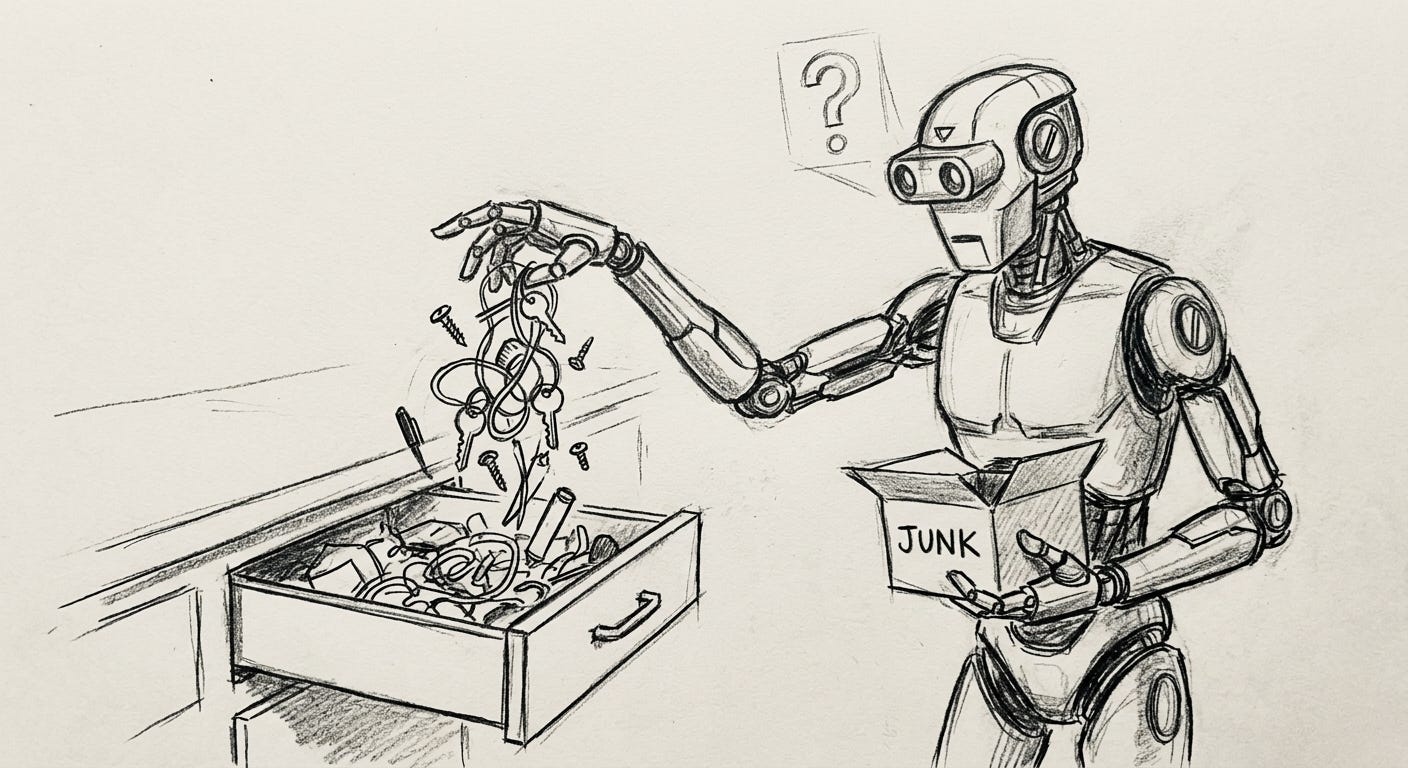

Your Agent’s Context Window Is Not a Junk Drawer

Why the “throw everything in and let the model sort it out” approach is the agent equivalent of studying by highlighting the entire textbook.

Everyone has one. That drawer in the kitchen where you put the thing that doesn’t have a place. Batteries, takeout menus, a screwdriver, three pens that don’t work, two different keys, a warranty card for something you no longer own, and a single AA battery that might be dead.

You know the drawer exists. You know roughly what’s in it. And every time you need something from it, you open it, stare at the chaos, and rummage until you find what you’re looking for or give up and buy a new one.

This is how most AI agents manage their context window.

Every file, every conversation turn, every tool result, every memory hit gets pushed in. The agent gets a massive blob of text and is expected to figure out what matters. And because these models are genuinely smart, they often do figure it out. Which makes the whole thing feel fine.

Until it doesn’t. Until the context fills up and the model starts quietly dropping the thing you actually needed. Until the answer is sitting in a file that got loaded but buried under 40,000 tokens of irrelevant tool output. Until the agent confidently acts on information from three tasks ago because it’s still sitting in the window, unmarked and undifferentiated.

The model is swimming through your junk drawer looking for that one working pen. Sometimes it finds it. Sometimes it grabs the dead one and writes nothing with full confidence.

Here’s the thing that should bother you: your brain doesn’t recall a childhood memory with the same fidelity as what you had for breakfast. That’s not a bug. That’s a feature refined over a few hundred million years of evolution.

You have different memory systems for different purposes.[1]

Episodic memory stores events with sensory and emotional detail. Your wedding. That car accident. The smell of your grandmother’s kitchen. High fidelity, context-rich, but expensive to maintain and slowly degrading.

Semantic memory stores facts stripped of context. Paris is the capital of France. Water boils at 100°C. You know these things without remembering when or where you learned them. The original episode is gone. The fact remains, compressed to its essence.

Procedural memory stores how to do things. Ride a bike, type on a keyboard, parallel park. You can’t easily articulate the knowledge, but your hands know.

Working memory is the tiny scratch pad where you actually think. It holds maybe 7 items (plus or minus 2, if you want the classic Miller number). Everything you’re actively reasoning about lives here. Everything else is in storage, waiting to be retrieved.

The brain runs multiple memory systems because one compression ratio doesn’t fit all tasks. A childhood birthday party and the periodic table serve completely different cognitive purposes. Storing them the same way would be insane.

And yet.

Most agent architectures dump everything into a single flat context window, held at uniform fidelity. Your agent’s identity document carries the same weight as the output of a tool call made six turns ago. A memory search result about a project decision made last month sits next to the raw JSON from an API call made thirty seconds ago. There is no hierarchy, no prioritization, no decay.

It’s the cognitive equivalent of a student who highlights every line in the textbook. They technically “studied” and functionally learned nothing.

The frustrating part is that this failure mode is quiet. The agent doesn’t crash. It doesn’t throw an error. It just gets slightly worse.

You notice it as inconsistency. The agent was great yesterday and mediocre today. It nailed the first task and fumbled the third. It gave a brilliant answer and then immediately contradicted itself. You blame the model, or the prompt, or the temperature setting. You tweak things. Sometimes it helps. Usually you’re just rearranging deck chairs.

What actually happened is that the context got cluttered. The signal-to-noise ratio in the window degraded as the session went on. At the start of a conversation, the window is clean: a fresh system prompt, and relevant context dominates. By turn 20, it’s a junk drawer full of old tool results, resolved tangents, and abandoned threads that never got cleaned up. And the model spends attention on all of it, because it has no way to tell what is still relevant.
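One rough way to make that erosion visible is to track what share of the window belongs to the task at hand. The sketch below assumes every item in the window is tagged with a hypothetical `task_id` and a token count measured elsewhere; neither comes from any particular framework.

```python
from dataclasses import dataclass


@dataclass
class WindowItem:
    task_id: str  # hypothetical label for the task that produced this item
    tokens: int   # token count, assumed to be measured elsewhere


def signal_share(items: list[WindowItem], current_task: str) -> float:
    """Return the fraction of window tokens that belong to the current task."""
    total = sum(item.tokens for item in items)
    if total == 0:
        return 1.0  # an empty window contains no noise
    relevant = sum(item.tokens for item in items if item.task_id == current_task)
    return relevant / total
```

A number like this is not a quality metric on its own, but watching it fall over a session is at least something you can log, which is more than the junk drawer gives you.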

This is the real cost of treating the context window as a junk drawer: not catastrophic failure, but the slow, invisible erosion of quality that you can’t debug because there’s no stack trace for “the model got confused by irrelevant context.”

So if one big flat window is the wrong model, what’s the right one?

Start with a question that most agent frameworks never ask: what does the model actually need to know right now?

Not “what might be relevant” or “what’s available.” What is necessary for this specific task, at this specific moment, given what the agent is trying to do?

This is a fundamentally different question. It shifts the work from the model (figure out what matters from this pile) to the system (give the model only what matters). It’s the difference between dumping the entire filing cabinet on someone’s desk versus handing them the three folders they need.

Think about how you’d brief a new contractor on a project. You wouldn’t hand them every Slack message, every commit, every design doc, and every meeting recording from the past six months. You’d give them:

- What the project is (high-level context)
- What’s been decided (key decisions, constraints)
- What they’re working on right now (the immediate task)
- Where to find more detail if they need it (references, not content)

That’s four layers. Progressively higher detail as you get closer to the current task. The distant stuff is compressed to summaries. The recent stuff is rich. The current task gets everything.
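As a minimal sketch, the contractor briefing could be modeled as a small data structure that is rendered into the window in a fixed order. The `Briefing` type, its field names, and the section labels are all assumptions made for illustration, not part of any existing framework.

```python
from dataclasses import dataclass, field


@dataclass
class Briefing:
    project_summary: str   # layer 1: what the project is, compressed
    decisions: list[str]   # layer 2: key decisions and constraints
    current_task: str      # layer 3: the immediate task, in full detail
    references: list[str] = field(default_factory=list)  # layer 4: pointers, not content


def render(briefing: Briefing) -> str:
    """Assemble the layers in order, from most distant to most immediate."""
    sections = [
        "PROJECT\n" + briefing.project_summary,
        "DECISIONS\n" + "\n".join(f"- {d}" for d in briefing.decisions),
        "CURRENT TASK\n" + briefing.current_task,
    ]
    if briefing.references:
        # Only the locations are listed; the referenced content stays out of the window.
        sections.append("REFERENCES\n" + "\n".join(f"- {r}" for r in briefing.references))
    return "\n\n".join(sections)
```

The point of the structure is that the references layer holds paths or identifiers only, so detail is pulled in when the task calls for it rather than loaded up front.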

This is how humans naturally share context. We triage. We summarize. We compress the old and expand the new. We don’t dump raw data on people and hope they figure it out.

Your agent deserves the same courtesy.

“Fine,” you say. “I’ll just summarize old context and keep the window clean.”

Sure. But which summary goes in there? Summarized for what purpose?

This is where it gets interesting. The same conversation, summarized for different purposes, looks completely different.

Imagine a 50-turn conversation where you and your agent debugged a deployment issue. If you summarize that for future debugging, you want the root cause, the fix, and the gotchas. If you summarize it for project status, you want “deployment fixed, took 2 hours, watch for X.” If you summarize it for the agent’s own memory, you want “Joe prefers to check logs first, the staging environment has a known DNS issue.”

Three completely different summaries. Same conversation. Because the right compression depends on what you’re compressing for.
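Here is one way that idea might look in code: the purpose is an explicit argument, and it selects the instruction that gets sent along with the transcript. The purpose names and instruction wording are assumptions drawn from the debugging example above, and the actual model call is deliberately left out.

```python
# Hypothetical purposes and instructions, taken from the deployment example.
SUMMARY_INSTRUCTIONS = {
    "debugging": "Extract the root cause, the fix, and any gotchas.",
    "status": "Give a one-line outcome, the time spent, and anything to watch for.",
    "agent_memory": "Record durable user preferences and environment quirks.",
}


def build_summary_prompt(transcript: str, purpose: str) -> str:
    """Build a summarization prompt whose instruction depends on the purpose."""
    if purpose not in SUMMARY_INSTRUCTIONS:
        raise ValueError(f"unknown purpose: {purpose!r}")
    return f"{SUMMARY_INSTRUCTIONS[purpose]}\n\nConversation:\n{transcript}"
```

Forcing the caller to name a purpose is the design choice that matters here. A summarizer with no purpose argument has to guess what to keep.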

This is the thing that static RAG and “just stuff it in the window” approaches miss entirely. Retrieval without purpose is just search. You need to know why you’re retrieving before you can know what to retrieve and how to compress it.

One compression ratio doesn’t fit all tasks.

I’m not going to lay out a full architecture here.[2] But the implications point in a clear direction:

Context assembly should be a first-class engineering problem. Not an afterthought. Not “load the system prompt and hope for the best.” The pipeline that decides what goes into the context window matters more than the model that processes it. A mediocre model with perfect context will outperform a frontier model swimming through noise.

Different information needs different treatment. Identity and personality? Always present, highly compressed. Recent conversation? Full fidelity, but with expiration. Old decisions? Summary form, retrievable on demand. Tool results? Consumed and compressed immediately, not left raw in the window.
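A minimal sketch of what “different treatment” could mean in practice is a policy table keyed by the kind of information. The `Kind` and `Policy` names are hypothetical, and the expiry limits (10 turns, 3 turns) are placeholder assumptions, not recommendations.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Kind(Enum):
    IDENTITY = "identity"
    RECENT_TURN = "recent_turn"
    OLD_DECISION = "old_decision"
    TOOL_RESULT = "tool_result"


@dataclass(frozen=True)
class Policy:
    always_present: bool           # pinned in the window regardless of task
    form: str                      # "full", "summary", or "compressed"
    max_age_turns: Optional[int]   # None means the item never expires


# Placeholder values; the turn limits are assumptions for illustration.
POLICIES = {
    Kind.IDENTITY: Policy(always_present=True, form="compressed", max_age_turns=None),
    Kind.RECENT_TURN: Policy(always_present=False, form="full", max_age_turns=10),
    Kind.OLD_DECISION: Policy(always_present=False, form="summary", max_age_turns=None),
    Kind.TOOL_RESULT: Policy(always_present=False, form="compressed", max_age_turns=3),
}


def is_expired(kind: Kind, age_turns: int) -> bool:
    """Return True when an item of this kind has outlived its policy."""
    limit = POLICIES[kind].max_age_turns
    return limit is not None and age_turns > limit
```

Even a table this crude gives the window a notion of hierarchy and decay, which is exactly what the flat approach lacks.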

The system, not the model, should do the filtering. Asking the model to figure out what’s relevant from a pile of context is using your most expensive, most capable component for janitorial work. That’s like hiring a surgeon to also do intake paperwork.
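To make that concrete, here is a small sketch of system-side filtering: candidates are ranked, anything below a relevance floor is dropped, and the rest are packed greedily into a token budget. The `relevance` score is assumed to come from some upstream scorer that is not shown, and the 0.5 threshold is an arbitrary placeholder.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    relevance: float  # assumed to come from an upstream scorer, 0.0 to 1.0
    tokens: int       # assumed token count for this piece of context


def select_context(
    candidates: list[Candidate],
    budget_tokens: int,
    min_relevance: float = 0.5,
) -> list[Candidate]:
    """Pick the most relevant candidates that fit within the token budget."""
    chosen: list[Candidate] = []
    used = 0
    for cand in sorted(candidates, key=lambda c: c.relevance, reverse=True):
        if cand.relevance < min_relevance:
            break  # the list is sorted, so everything after this is less relevant
        if used + cand.tokens <= budget_tokens:
            chosen.append(cand)
            used += cand.tokens
    return chosen
```

This is plain greedy packing, not an optimal selection. The point is only that the decision happens before the model sees anything, in cheap code rather than in expensive attention.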

Memory is a behavior, not a feature. It’s not enough to store things. You have to know when to retrieve, what fidelity to retrieve at, and how to integrate the retrieved context with what’s already in the window. That’s not a database problem, it’s a cognitive architecture problem.

Your agent isn’t dumb. It’s drowning.

The context window is the most valuable real estate in your entire system. Every token in it competes for the model’s attention. Every irrelevant byte is a tax on quality. Every stale result from three tasks ago is a trap waiting to mislead.

Stop treating it like a junk drawer. Start treating it like what it is: the only thing your agent can see, the lens through which every decision gets made.

What you put in front of that lens changes everything.

[1] If you’re a psych nerd: yes, I’m simplifying. Tulving’s taxonomy, Squire’s declarative/nondeclarative split, the debates about whether episodic and semantic are actually distinct systems. The point isn’t neuroanatomical precision. The point is that biological cognition figured out a long time ago that one storage format doesn’t work.

[2] I have thoughts. Several of them, but that’s for another day. Stay tuned, though.