The AI I Was Promised

How the dream of human-AI collaboration got hijacked, and why I’m taking it back

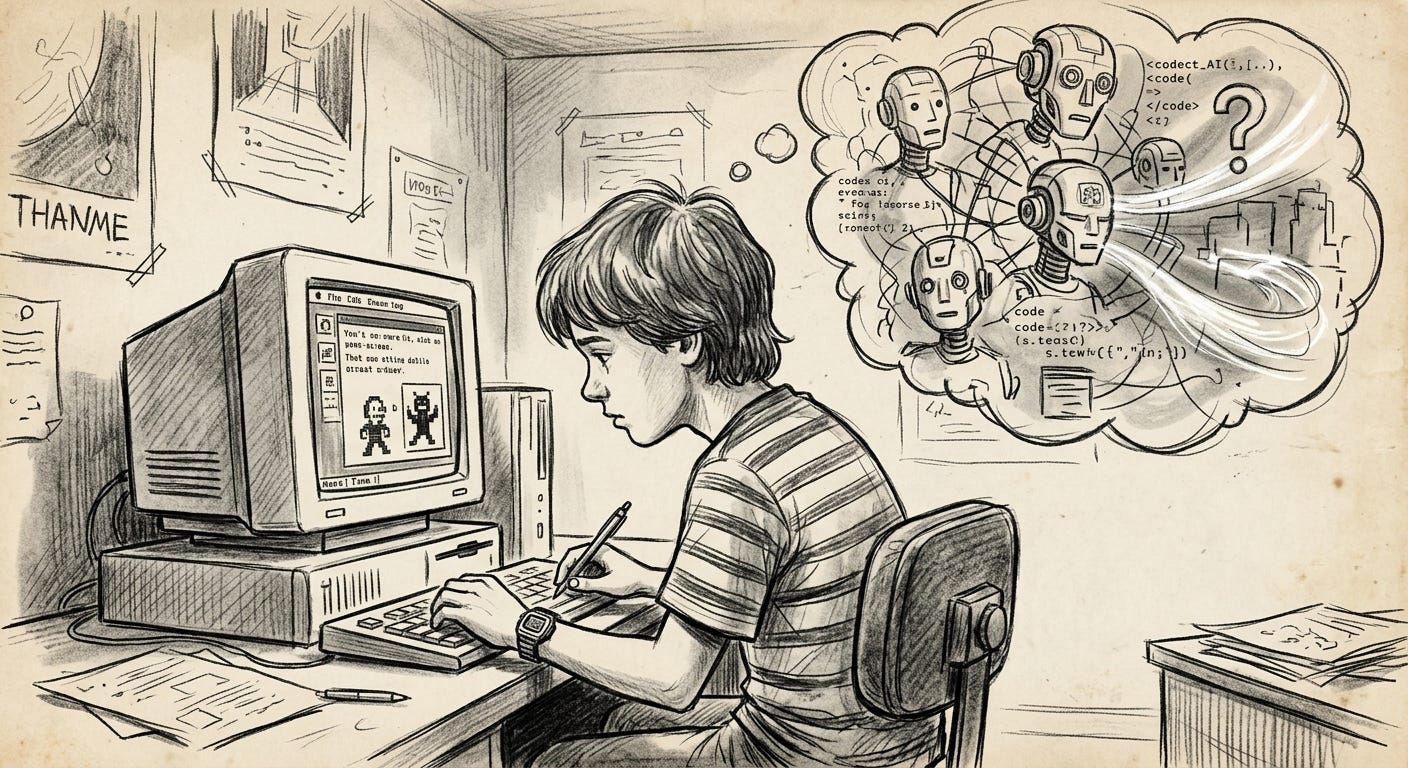

I remember being ten years old, sitting in front of a huge beige box in my room, and having a very specific vision of the future, one that included an AI. It was already being imagined in movies and TV: sometimes it looked like a robot, sometimes it lived in the screen. I didn’t know much about this technology, but I knew I wanted it to work with me. We’d build things together. It would handle the parts I was bad at and teach me the things I didn’t know. I’d handle the parts that required being a human, and between the two of us we’d be unstoppable, even at ten.

That felt like the promise of the future. Every movie and book and sci-fi show I consumed as a kid reinforced it. The computer was the sidekick. The thing that made the human more.

Thirty years later, I’m finally living some version of that dream. I have an AI agent that knows my projects, remembers my preferences[1], helps me ship code, and occasionally writes a first draft that I tear apart and rebuild into something better. One guy and an LLM shipping things that would have taken a team. The ten-year-old would be thrilled.

So why does it feel like I’m building this against the grain of what the industry wants?

If you were in tech in the mid-2000s through the early 2010s, you remember the energy. Technology was going to connect people and democratize information. There was an optimism that wasn’t naive, it was earned. The iPhone had just put a computer in everyone’s pocket. Wikipedia had just proven that strangers could collaborate on knowledge at scale. The startup mythology was about garage inventors changing the world, not about optimizing engagement metrics to sell ads for mattress companies and dick pills (vertical integration FTW).

Something shifted in the past decade. If you plot the sentiment of technology coverage over time[2], you can practically draw a line around 2012-2014 where the narrative turns. Tech went from “look what we can build” to “look what they’re doing to us.”

The doomer vibes weren’t irrational. They were a response to a real change in the business model.

In the 2000s, the dominant tech companies made money by selling you software, hardware, and services. The incentive structure was simple: make something useful, charge money for it. You were the customer. The relationship was honest.

Quick note, this is where I have to be careful not to tell a story that’s too clean. Google was ad-supported from 2000. Advertising has always shaped media.

So the “you are the product” dynamic didn’t appear from nowhere in 2012. What changed wasn’t the business model itself; it was the scale and sophistication of behavioral data collection underneath it. A newspaper selling ads next to articles and a platform building a real-time psychological profile of every user to serve them maximally engaging content are not different by degree. They’re a different species of thing.

Shoshana Zuboff[3] called this surveillance capitalism. Her thesis is that tech companies discovered predicting and modifying human behavior was more profitable than serving human needs. Some economists contest the thesis, arguing that she overstates the novelty, but the lived experience tracks: the products got free, the behavioral targeting got granular, and the incentive shifted from “serve the user” to “capture the user’s attention by whatever psychological means necessary”[4].

That’s the inflection. Not “capitalism” as an abstract force, and not “too many assholes” as a demographic problem (though: contributing factor). A specific change in what was being optimized that turned users from customers into raw material.

AI inherited that extractive mindset wholesale.

Listen to how AI gets pitched in boardrooms and investor decks. The framing is almost never “here’s a tool that makes your people better at their jobs.” It’s “here’s a system that does their jobs without them.” McKinsey talks about automating X million jobs, and that framing tells you everything, because it’s a labor cost story wearing a technology costume.

The kid in me imagined a collaborator. What keeps getting funded is a replacement, because replacement is what the spreadsheet optimizes for. Augmentation requires understanding what humans are actually good at and building around it. Replacement just requires being cheaper.

Peter Thiel does interviews now where he says he’s “not sure about the human race.” He might mean a dozen things by that: civilizational decline, contrarian provocation for its own sake. Thiel is slippery on purpose. But whatever he means philosophically, the effect in the rooms where AI funding gets allocated is a frame where human capability is a depreciating asset. When that frame meets a spreadsheet, you get “automate X million jobs” as the value proposition. Not because anyone decided to be anti-human. Because the math only works one way when your metric is labor cost reduction, more profit for the next quarter.

It’s a failure of imagination dressed up as inevitability.

There’s a downstream effect of the replacement mindset that already hits close to home for anyone who actually makes things: slop.

AI-generated slop is everywhere[5]. Fifty identical blog posts optimized for keywords and generated in seconds. Art that looks like it was rendered by someone who has seen art described but never experienced it. Code that technically compiles but was clearly never reviewed by anyone who cared whether it was good.

The slop problem is a taste problem. AI didn’t create people who want to generate fifty mediocre blog posts instead of writing one good one. Those people were already out there cranking out content-farm garbage by hand. AI just gave them a faster printing press and social media reinforced the behavior.

The printing press didn’t ruin literature. It created a flood of garbage AND made Shakespeare accessible to everyone. Both happened simultaneously, and we’re in the flood (read: torrential shitstorm) phase.

Let’s be honest about the limitations of that analogy. The printing press didn’t just produce garbage; it also facilitated propaganda on a massive scale and contributed to horrific religious wars. The notion that “both good and bad things happened” took approximately two centuries to resolve into a net positive outcome. If your livelihood is being displaced right now, the response of “give it 200 years” is utterly inadequate. The flood phase will cause real casualties, and I don’t intend to dismiss that fact. No one should.

This brings me to defend the original Luddites, who are often misunderstood. Historians like E.P. Thompson have demonstrated that they were not anti-technology[6]. Instead, they posed a specific question: “What if the machines were controlled by the workers and utilized to enhance their lives, rather than simply enriching the factory owners?”[7]

That question, which is over 200 years old, remains unanswered. The truth is more complex than simply stating that workers were exploited. Industrialization did eventually improve living standards overall, and many of the jobs it displaced were brutal. However, the word “eventually” plays a significant role in that sentence. The transition was harsh and stretched across generations, and the burden fell on those who didn’t reap the benefits. The Luddites were correct that the advantages were unevenly distributed. They were mistaken about the timeline, but that is far easier to assess from a distance of 200 years than when your livelihood is at stake this very year.

Every major technology wave follows the same general shape: the tool is neutral, but the power structure around it determines who benefits and how fast. The printing press, the loom, the assembly line, radio, television, the internet, each one spawned both utopian promises and dystopian outcomes. The determining factor was never the technology itself. It was who controlled it and what they optimized for.

The French philosopher and sociologist Jacques Ellul argued that technology has its own internal logic that reshapes society regardless of human intent. I used to think that was too deterministic. Lately I’m less sure. When the attention economy turned social media into an outrage machine, was that a choice someone made, or was it the inevitable result of optimizing for engagement? When AI companies frame everything as automation, is that a strategic decision, or just what happens when the metric is shareholder value?

I think it’s both. The incentive structure creates a gravity well, and most companies fall into it. But gravity isn’t destiny. You can build rockets[8].

In my actual day-to-day, building with AI as a collaborator, the ratio looks like this:

The AI handles roughly 80% of execution that used to be tedious. Boilerplate, first drafts, research synthesis, the stuff that took hours and required minimal judgment but maximum time.

I do the 20% that’s taste, direction, editing, and knowing what’s actually worth building.

I want to make this concrete because it’s easy to assert without showing it. Last month I built a memory pipeline for AI agents, an open-source tool that gives an agent the equivalent of sleep consolidation. The AI wrote the first drafts of the extraction scripts, the linking logic, the briefing generator. That’s the 80%. But the AI didn’t know that the Zettelkasten method was the right organizational metaphor. It didn’t know that a behavioral instruction (“search before you guess”) would outperform the entire technical pipeline. It didn’t decide that the post about it should open with the experience of your agent staring at you like a golden retriever, because that’s the moment every user recognizes. Those decisions, the ones that depend on context and make the difference between “technically works” and “people actually want this,” are the important 20%. This is also where the slop comes from: people who don’t care about their 20%.

Someone skeptical would say: what if taste is also automatable, just on a longer timeline? Maybe it is. I can’t prove it isn’t. Honestly, I don’t really give a shit if it is. But right now, I can observe that every AI output I’ve seen that lacked human editorial judgment was worse than the ones that had it. Categorically worse. That’s only a data point, not a proof. But it’s the data point I currently have, and it’s consistent across everything I’ve built and seen come out this year.

The 80% without the 20% is slop. The 20% without the 80% is a person with great ideas and no time to execute them. Together, they’re something new. For me, this has been a huge unlock. I can validate ideas faster (or even better, invalidate them). I can execute and test instead of just planning. I spend more time on deep work and less on small task completions[9]. It’s not here to do my work for me. It’s here to help me do better work and try more things. Creativity and experimentation are at the core of the human experience, and with AI, I get to do more of both.

In psychology, there’s a well-studied distinction between extrinsic motivation (do the thing because of external rewards or punishments) and intrinsic motivation (do the thing because it’s meaningful or aligned with your values). Decades of research[10] show that intrinsic motivation produces better outcomes and more creativity.

The companies building replacement AI are optimizing for the extrinsic: cut costs, reduce headcount, improve margins. The pitch deck version of motivation.

Building AI as a collaborator is the intrinsic play. Make the human more capable and give the solo builder leverage they’ve never had before.

I want to say “the intrinsic approach is also the bigger market” and I believe that, but I have to be honest: markets don’t always reward what produces the best human outcomes. The exploitative attention model won for a reason, through network effects, winner-take-all dynamics, the cold logic of free products subsidized by behavioral advertising. There’s no natural law that says the augmentation model will outcompete the replacement model. It might require people to actively choose it. Which means it might require people to know the choice exists.

I’m pro the AI I was promised. The collaborator. The one that lets one person with taste and vision build what used to require a team. It’s my actual life right now. I’m researching and building tools for AI agents, designing cognitive architecture[11], shipping real products, and doing it with an AI partner that knows my projects, remembers my decisions, and does the heavy lifting while I steer.

On the flip side, there’s a lot of anti-AI sentiment right now, and I understand every bit of it. The companies ruining the perception of AI are earning that backlash. When your main experience of AI is getting spam-called by a voice bot, watching your industry get threatened with automation, and scrolling past AI-generated garbage flooding every platform you use, the anti-AI stance is perfectly rational.

But I refuse to let that be the whole story. This moment, right now, is what I’ve been waiting for since I was a kid. AI is finally capable enough to work with me. A real collaborator that amplifies what I can do.

We should be more creative, not less. We should be building more, not watching AI build worse versions of things we could have done ourselves.

The technology isn’t going away, so the question that matters, the same one the Luddites were asking, is: who does it serve?

Right now, the loudest and most depressing answer is “shareholders.” AI as cost reduction, as labor replacement, as a way to do more with fewer people where “fewer people” is the point.

But there’s another answer, quieter, being lived out by builders who actually use this stuff daily: it serves the person holding it.

I know which version the ten-year-old was imagining. And I know which version I’m building.

The venture-funded replacement fantasy is a failure of imagination dressed up as inevitability. The real product, the one that actually fulfills the promise, is the one where the human stays in the loop. Not because the AI can’t do it alone, but because the human is the whole point.

[1] If you’ve been following this series, you know this was not a given. See “Your AI Agent Has Amnesia, and It’s Not a Tech Problem” for the full saga.

[2] I haven’t actually plotted this, but someone should. My gut says the inflection correlates almost perfectly with Facebook’s IPO and the subsequent pressure to monetize attention at scale.

[3] Shoshana Zuboff, The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power (2019).

[4] The classic psychological tactic here is the variable reward schedule, which is literally slot machine mechanics. Tristan Harris called it “the race to the bottom of the brain stem.” If you haven’t watched The Social Dilemma, it’s worth your time, but don’t let it ruin your week.

[5] I mean EVERYWHERE. It’s not just social media: it’s in code repos disguised as issues and PRs, and it’s in product reviews, article comments, and actual articles. Anything that can be generated by an agent is.

[6] E.P. Thompson’s The Making of the English Working Class is the definitive source here. The Luddites weren’t technophobes. They were labor organizers asking who benefits from automation. The complicating fact is that industrialization did eventually benefit broadly, but “eventually” meant multiple generations of displacement, poverty, and social upheaval before the gains materialized. Take that as you will.

[7] And please refrain from starting with some nonsense about communism, socialism, and capitalism. The crux of the matter lies in caring about the society we inhabit. It is possible for everyone to earn more, including the owners, without resorting to exploitation. This is a matter of human decency, not a political one.

[8] Ironically, one social media platform is tied to a rocket company and it’s become a mess. The metaphor came naturally, not because of this reality.

[9] A pseudo-psych self-help mindset guru just read that line and passed out from the dopamine spike. Jokes (and my disdain for many of these “gurus”) aside, this outcome of more time for deep and creative work is what the human experience is about.

[10] Deci and Ryan’s Self-Determination Theory is the canonical framework here. The short version is that autonomy, competence, and relatedness drive the best individual human performance. Replacement AI strips all three. Augmentation AI enhances all three. The leap from “intrinsic motivation produces better individual outcomes” to “augmentation AI will win the market” is real, and markets reward lots of things besides optimal human flourishing. But I’d rather bet on the thing that makes people better than the thing that makes people unnecessary.

[11] If you want the technical version of what this looks like in practice, I’ve been writing a series on it: “Your AI Agent Has Amnesia” (memory), “The Pre-Game Routine Your AI Agent Desperately Needs” (behavior), and “Give Your AI Agent a Memory” (implementation). This post is the why behind all of that.