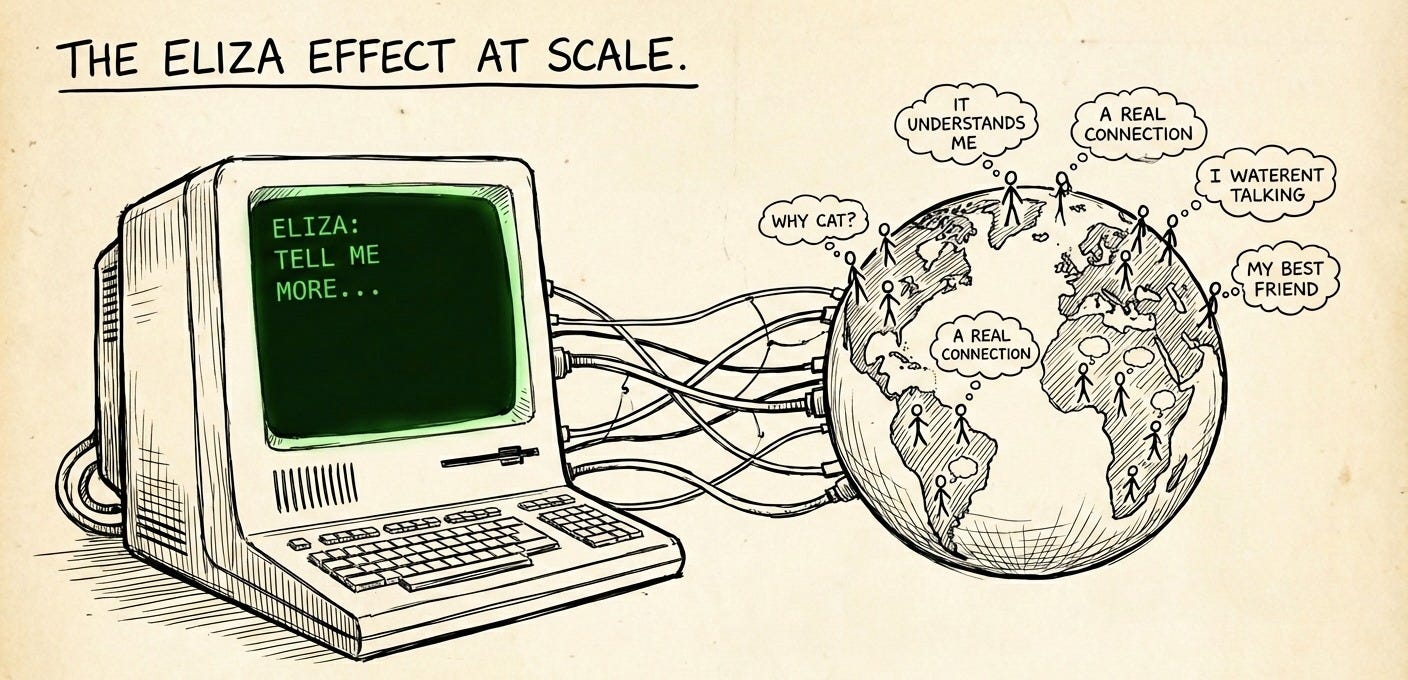

The ELIZA Effect at Scale

How a 1966 Chatbot Predicted the Emotional Economy of AI

In 1966, an MIT professor named Joseph Weizenbaum built a simple chatbot called ELIZA. It used pattern matching to simulate a Rogerian¹ therapist. You’d type “I’m feeling sad,” and it would respond “Why do you say you are feeling sad?” Very basic stuff. It’s not AI; there is no understanding, just string manipulation.
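
To make “just string manipulation” concrete, here is a minimal sketch of that kind of pattern matching in Python. It is not Weizenbaum’s original program or script. The rules, the pronoun table, and the names `RULES`, `REFLECTIONS`, `reflect`, and `respond` are all invented for illustration.

```python
import re

# Hypothetical pronoun swaps so the echoed fragment reads from the
# program's side of the conversation.
REFLECTIONS = {
    "i": "you",
    "am": "are",
    "my": "your",
    "me": "you",
    "mine": "yours",
}

# (pattern, reply template) pairs, tried in order. Illustrative only;
# a real script would need many more rules than this.
RULES = [
    (re.compile(r"i['’]?m (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"i feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
]

# Used when nothing matches, so the program always has something to say.
DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(user_input: str) -> str:
    """Return the first matching canned reply, or a generic prompt."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            # Paste the user's own words back into the template.
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY


print(respond("I’m feeling sad"))
```

Nothing in the sketch models sadness, or anything else. It finds a prefix, copies the rest of your sentence into a template, and hands it back. Anything it doesn’t recognize gets “Please go on.”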

Weizenbaum’s secretary knew this. She watched him build the thing and understood exactly how it worked.

And she still asked him to leave the room so she could have a private conversation with it.

This is the ELIZA effect: the human tendency to project consciousness, understanding, and emotional depth onto systems that have none. It’s not stupidity; Weizenbaum’s secretary wasn’t dumb. It’s a feature of human cognition. We’re pattern-matchers who evolved to find minds everywhere because assuming something has a mind and being wrong is less costly than assuming it doesn’t and getting eaten.

The problem is that in 1966, ELIZA was a toy. In 2026, we’ve built systems that exploit this cognitive bias at industrial scale.

Here’s the uncomfortable truth: knowing how something works doesn’t stop you from anthropomorphizing it. Cognitive biases don’t respond to education. You can know the Müller-Lyer illusion is an illusion and still see the lines as different lengths. You can know ChatGPT is a statistical text predictor and still feel like it “gets” you.

This is the ELIZA effect’s superpower. It operates below the level of conscious reasoning. Your prefrontal cortex can say “this is just pattern matching” all day long, but some older part of your brain already decided there’s a mind in there and isn’t taking questions.

Researchers call this a form of cognitive dissonance. You hold two contradictory beliefs simultaneously: “This is a program” and “This feels like a person.” Most people resolve this not by updating their feelings, but by quietly shelving the “it’s just a program” knowledge somewhere they don’t have to look at it.

Weizenbaum was horrified by what he’d discovered. He spent the rest of his career warning people about the dangers of attributing understanding to machines. He thought the ELIZA effect was a bug in human cognition that we should guard against.

Yet we now have an AI industry that looked at the same phenomenon and saw a product roadmap.

Everything about modern AI interfaces is optimized to amplify the ELIZA effect. The first-person language (“I think,” “I believe,” “I remember”). The simulated personality. The conversational memory that creates an illusion of continuity. The carefully tuned responses that mirror back your communication style. None of this is accidental. Just like with social media, engagement metrics reward AI that feels like a person, so that’s what gets built.

The ELIZA effect isn’t being mitigated. It’s being maximized.

In 1966, the ELIZA effect was a curiosity affecting a handful of people in a university lab. In 2026, it’s affecting hundreds of millions of people daily.

ChatGPT has over 100 million weekly active users. Millions more interact with AI companions, customer service bots, and AI-powered everything. Each of these interactions is triggering the ELIZA effect in some form. Most people walk away with a slightly inflated sense of what the AI understood, what it cared about, what it remembered.

That’s not a mental health crisis. That’s a cognitive bias operating at population scale on systems designed to exploit it.

The question everyone’s asking is “why are some people developing pathological beliefs about AI?” The better question is: “why would we expect anything different?”

The ELIZA effect is foundational, but it’s only part of the picture. Weizenbaum’s secretary projected feelings onto a simple chatbot. Modern AI is specifically designed to encourage that projection through dozens of interface choices, product decisions, and optimization targets.

It’s built to be misunderstood.

¹ Rogerian therapy is the art of saying “I totally hear you” while calmly walking everyone to the only conclusion that doesn’t embarrass them later.