The Pre-Game Routine Your AI Agent Desperately Needs

Performance psychology meets agent architecture: why the smartest agent in the room still chokes under pressure, and what to do about it.

NOTE: this is the second in a series I’m writing on memory and AI agents; the first can be found here.

The Modern Agent Failure

Your agent is smart. It can write code, search the web, parse documents, call APIs. It has access to a hundred tools and a context window measured in hundreds of thousands of tokens.

And yet, like most things in modern times, it is inconsistent.

Same task, same tools, wildly different outcomes depending on what happened to be in context, which memory files got loaded, how the previous tool result bloated the conversation, whether the system prompt got clobbered by a mid-run correction someone injected three turns ago. And that’s assuming you are using a frontier model.

The failure mode isn’t intelligence. It’s consistency. The agent is being asked to think and perform at the same time. It’s asked to design its approach, retrieve context, self-correct, execute tools, and synthesize results all within a single undifferentiated loop. That’s like asking a surgeon to simultaneously diagnose the patient, plan the operation, prep the instruments, and perform the surgery while someone shouts revised instructions from the gallery.

The problem isn’t capability. It’s the absence of a routine.

Two Modes: Purposeful Thinking vs. Reactive Execution

Performance psychology has a model for this that athletes, surgeons, fighter pilots, and competitive gamers all use, whether they know the formal name or not.

Purposeful Thinking is the preparation phase. Situational awareness. Review the game film. Check the conditions. Run the mental checklist. This is where you identify the task, gather what you need, set constraints, and commit to a plan. It happens before the whistle blows.

Reactive Execution is game time. You’re running trained sequences. Muscle memory. The basketball player doesn’t think about elbow angle during the free throw; they practiced that a thousand times. The pilot doesn’t re-derive the physics of lift during takeoff. They follow the procedure.

Here’s the trick: you do not want “thinking” during execution. No mid-swing coaching. The only exception is genuine exception handling, when something went wrong that the routine didn’t anticipate. In that case, you stop, re-enter purposeful thinking mode, re-plan, then resume execution.

This isn’t anti-intellectual. It’s the opposite. It’s so intellectual that you front-load all the intelligence into preparation, so execution can be boring and deterministic. The best human performances look effortless precisely because the thinking and preparation already happened.

Every great performer has a pre-game routine and preparation. Your agent doesn’t.

Let’s fix that.

What follows is a concrete architecture for giving your agent the same structural advantage that athletes, pilots, surgeons, and racing drivers have relied on for decades. (I implemented this as an OpenClaw skill; more on that in the next post.)

Translating the Metaphor Into Architecture

Before we write code, let’s define terms in agent-land:

Session context: what the agent sees right now. System prompt, conversation history, tool results. Ephemeral. Gone when the session ends (or when compaction eats it).

Durable memory: files on disk. Notes, identity docs, project context, after-action reviews. Survives across sessions. Must be explicitly loaded.

Retrieval vs. injection: retrieval means the agent decides to go looking for something. Injection means we push the right context into the agent before it starts. Injection is the pre-game routine; retrieval is the mid-game improvisation.

Skills/tools as deterministic operators: a tool call should be a trained sequence. Input goes in, output comes out. The agent doesn’t need to understand the tool’s internals, but it absolutely needs to know when to use it and what to expect back.

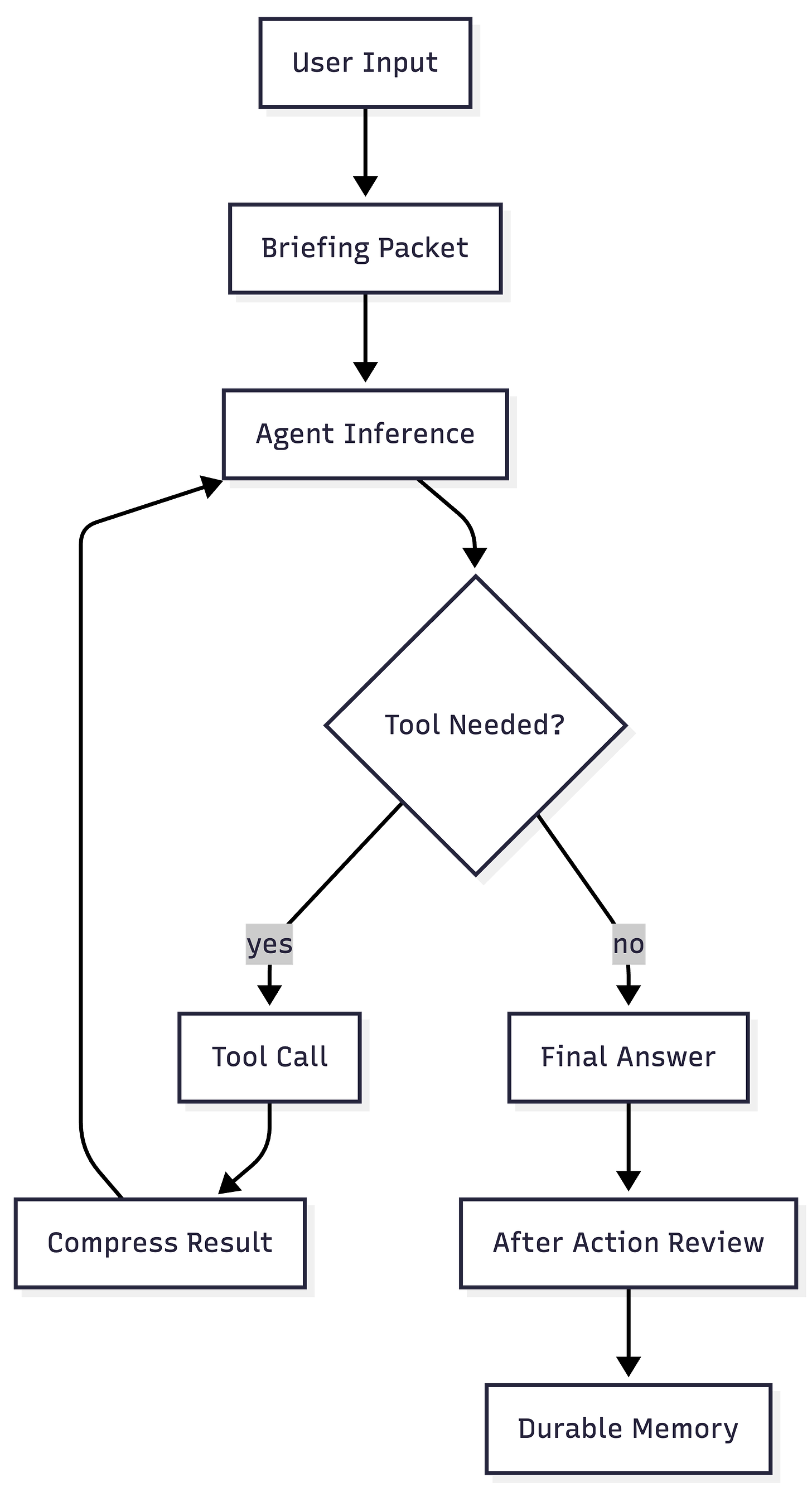

The core execution loop looks like this:
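
In code, a minimal sketch of that loop might read as follows. Only the hook names before_agent_start and agent_end come from this post; the types, runAgent, and the re-plan limit are hypothetical stand-ins, not the actual plugin API.

```typescript
// Hypothetical shapes: only the two hook names come from the post.
type Hooks = {
  before_agent_start: (taskHint: string) => string; // returns the briefing
  agent_end: (task: string, outcome: string) => void; // after-action review
};

// One execution pass either finishes or asks to go back to preparation.
type StepResult =
  | { kind: "done"; outcome: string }
  | { kind: "replan"; reason: string };

type Execute = (briefing: string, task: string) => StepResult;

function runAgent(
  task: string,
  hooks: Hooks,
  execute: Execute,
  maxReplans = 1
): string {
  let hint = task;
  for (let attempt = 0; attempt <= maxReplans; attempt++) {
    // Purposeful thinking: build the briefing before execution starts.
    const briefing = hooks.before_agent_start(hint);
    // Reactive execution: nothing new is injected during this call.
    const result = execute(briefing, task);
    if (result.kind === "done") {
      hooks.agent_end(task, result.outcome);
      return result.outcome;
    }
    // Genuine exception: stop, carry the reason into the next briefing.
    hint = `${task} (re-plan: ${result.reason})`;
  }
  const failure = `gave up after ${maxReplans} re-plan(s)`;
  hooks.agent_end(task, failure);
  return failure;
}
```

The point of the shape is that the only path back into preparation is an explicit re-plan result; there is no channel for new instructions while execute is running.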

Now let’s name the failure modes this structure prevents:

Token bloat: a single read_file on a large file dumps 50K characters into conversation history. Every subsequent inference pays the cost. The agent gets slower, dumber, more expensive.

Instruction collision: three different system prompt fragments all say slightly different things about how to handle errors. The agent picks one. Sometimes the wrong one.

Stale memory: the agent loaded a project doc from six months ago because it was in the default config. Now it’s confidently executing against outdated requirements.

Tool result spam: the agent called web_search and got 10 results, each with 2K of snippet text. Twenty thousand characters of search results now dominate the context, pushing the actual task instructions out of effective attention range.

Every one of these is a preparation failure, not an intelligence failure. The agent had the wrong context, too much context, or stale context when it started executing.
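
Token bloat and tool result spam can both be blunted by bounding a tool result before it enters conversation history. Here is a rough sketch of one way to do that, assuming a simple keep-the-head-and-tail policy. The function name compressToolResult, the 2,000-character default, and the 70/30 split are illustrative assumptions, not something the plugin is known to do.

```typescript
// Hypothetical helper: keep the start and end of an oversized tool result
// and replace the middle with a fixed marker, so history stays bounded.
function compressToolResult(text: string, maxChars = 2000): string {
  if (text.length <= maxChars) return text;

  const marker = "\n[... truncated ...]\n";
  // Budget too small to fit the marker: fall back to a hard cut.
  if (maxChars <= marker.length) return text.slice(0, maxChars);

  const keep = maxChars - marker.length;
  const head = Math.ceil(keep * 0.7); // most of the budget goes to the start
  const tail = keep - head; // the rest goes to the end
  return text.slice(0, head) + marker + text.slice(text.length - tail);
}
```

With this in place, a 50K-character read_file result would cost at most maxChars in every later inference, at the price of losing the middle of the file.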

The Briefing Packet Pattern

The briefing packet is the centerpiece. It gets built in before_agent_start and injected into the system prompt before the agent sees the user’s message. Here’s what goes into it:

1. Task restatement. A one-sentence summary of what the agent is about to do. This anchors the entire run.

2. Checklist. A short list of discipline reminders:

- Restate the task in one sentence.

- List constraints and success criteria.

- Retrieve only the minimum relevant memory.

- Prefer tools over guessing when facts matter.

3. Retrieved durable memory (bounded). Not the whole workspace. Not every memory file. A curated, bounded set of files that the config says are relevant. Truncated to a character limit.

The implementation is simple by design:

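```typescript
// Assumed helper (not shown in the original post): hard-cut a string to max characters.
const truncate = (s: string, max: number): string =>
  s.length <= max ? s : s.slice(0, max);

// Build the bounded briefing that is injected before the agent starts.
export function buildBriefingPacket(opts: {
  checklist: string[];
  memoryText: string;
  maxChars: number;
  taskHint: string;
}): string {
  const header =
    `# Pre-Game Routine\n` +
    `Task hint: ${truncate(opts.taskHint, 240)}\n\n` +
    `## Checklist\n` +
    opts.checklist.map((x) => `- ${x}`).join("\n") +
    `\n\n## Retrieved Memory (bounded)\n`;

  // Memory gets whatever budget the header leaves over.
  const body = truncate(
    opts.memoryText,
    Math.max(0, opts.maxChars - header.length)
  );

  // Final cut guards the case where the header alone exceeds maxChars.
  return truncate(header + body, opts.maxChars);
}
```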

The maxChars default is 6,000. That’s intentional. The briefing packet should be a page, not a novel. If you need more than 6K characters to brief your agent, you’re not briefing, you’re turbo dumping. The discipline is in the bounding.

The memory files are loaded resiliently: missing files don’t crash the routine; they’re silently skipped. This matters because an agent’s workspace is a living thing. Files come and go. The routine has to be more resilient than the environment it runs in.
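
A minimal sketch of that kind of resilient, bounded loading might look like the following. The loadMemory function and the ReadFile type are hypothetical; the reader is passed in so the sketch makes no assumption about how the real plugin touches the filesystem.

```typescript
// Hypothetical reader type: anything that returns file text or throws.
type ReadFile = (path: string) => string;

// Load the configured memory files in order, skip the ones that fail,
// and stop once the character budget is spent.
function loadMemory(paths: string[], read: ReadFile, maxChars: number): string {
  const sections: string[] = [];
  let used = 0;

  for (const path of paths) {
    if (used >= maxChars) break; // budget exhausted, stop reading

    let text: string;
    try {
      text = read(path);
    } catch {
      continue; // missing or unreadable file: skip silently
    }

    // Label each section with its path and clip it to the remaining budget.
    const section = `### ${path}\n${text.trim()}\n\n`;
    const clipped = section.slice(0, maxChars - used);
    sections.push(clipped);
    used += clipped.length;
  }

  return sections.join("");
}
```

In Node, the reader could be something like (p) => fs.readFileSync(p, "utf8"), and the returned string would be the memoryText handed to the briefing builder.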

The “No Mid-Swing Coaching” Rule

Here’s where most agent setups go wrong: they try to correct the agent during execution.

The user notices the agent is doing something slightly off. They inject a correction. “Actually, use the other API.” “Wait, I forgot to mention the format should be JSON, not YAML.” The system prompt gets patched mid-run. A new instruction appears in conversation history.

This is mid-swing coaching. And it’s devastating.

In performance psychology, there’s extensive research on what happens when you introduce conscious correction during a trained sequence. The athlete “chokes.” The musician loses the phrase. The performer starts second-guessing every micro-decision, and the overall quality drops precipitously. The corrective information may be accurate; the problem is the timing.

For agents, the mechanism is different but the outcome is identical. Mid-run corrections create instruction collision. The agent now has two competing directives: the original and the correction. It has to spend inference capacity figuring out which one to follow, whether they conflict, and how to reconcile them. That’s capacity it’s not spending on the actual task.

The alternative:

Correction log. Capture the correction, but don’t inject it into the current run. Write it to a file.

Condensed deltas. At the end of the run (or before the next one), condense all corrections into a single, non-contradictory update.

Next-run injection. The next briefing packet includes the corrected instructions. The agent executes against a clean, updated routine.
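
The three steps above can be sketched roughly as follows. Everything here is hypothetical: the Correction shape, the function names, and especially the condensing rule. This sketch assumes corrections are tagged with a topic and that the latest correction on a topic wins; a real condenser might need a model or a human to resolve conflicts.

```typescript
// Hypothetical shape: the post describes the pattern, not this API.
type Correction = { topic: string; instruction: string; turn: number };

// Correction log: capture the correction without touching the current run.
function logCorrection(log: Correction[], c: Correction): Correction[] {
  return log.concat([c]);
}

// Condensed deltas: keep only the most recent instruction per topic,
// so the result contains no two directives on the same topic.
function condense(log: Correction[]): string[] {
  const latest: { [topic: string]: Correction } = {};
  for (const c of log) {
    const prev = latest[c.topic];
    if (!prev || c.turn >= prev.turn) latest[c.topic] = c;
  }
  return Object.keys(latest)
    .sort()
    .map((topic) => `${topic}: ${latest[topic].instruction}`);
}

// Next-run injection: the condensed lines become part of the next checklist.
function nextChecklist(base: string[], log: Correction[]): string[] {
  return base.concat(condense(log));
}
```

If the user says “use YAML” at turn 1 and “use JSON, not YAML” at turn 3, the next run sees only the JSON instruction, and the current run sees neither.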

This is how military after-action reviews work. You don’t stop the patrol to rewrite the operations order. You complete the mission, debrief, update the SOP, and execute the updated SOP next time. It’s also how elite sports coaching works: the coach takes notes during the game, but delivers the feedback in the film room, not while the player is running a route.

The agent_end hook in the plugin writes an after-action review to durable memory. That review is available for the next run’s briefing packet to incorporate. The feedback loop is closed, but not during execution.

What to Measure

You built the routine. Now how do you know it’s working?

Median tokens per run (before/after). This is the headline metric. If the briefing packet and tool compression are working, the agent should use fewer tokens per successful task, not more. The briefing adds tokens up front but prevents the sprawl that comes from mid-run context gathering.

Tool calls per successful task. A well-briefed agent should need fewer tool calls because it starts with the right context already loaded. If tool calls go up, your briefing is missing something the agent keeps having to look up.

Retry rate. How often does a task need to be re-run because the first attempt failed or produced wrong output? The whole point of the routine is consistency. Retry rate is the direct measure.

Compaction frequency. If context compaction is firing less often, it means the conversation is staying within bounds. Tool result compression directly affects this.

Instruction churn. Count the number of competing directives injected per run, including system prompt fragments, mid-run corrections, and tool-injected instructions. This should trend toward one: the briefing packet. Everything else should be stable and non-contradictory.

Don’t measure everything at once. Start with median tokens and retry rate. Those two numbers will tell you if the routine is helping or just adding overhead.
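
As a rough sketch, those two starting numbers could be computed from a simple run log like this. The RunRecord fields are hypothetical, and the sketch makes two assumptions worth stating: median tokens is taken over successful runs only, and a task counts as retried if it appears more than once in the log.

```typescript
// Hypothetical run record: field names are illustrative.
type RunRecord = { taskId: string; tokens: number; succeeded: boolean };

function median(values: number[]): number {
  if (values.length === 0) return NaN;
  const sorted = values.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1
    ? sorted[mid]
    : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Median tokens across successful runs only (an assumption; you may
// prefer to charge failed attempts to the task as well).
function medianTokens(runs: RunRecord[]): number {
  return median(runs.filter((r) => r.succeeded).map((r) => r.tokens));
}

// Retry rate: share of distinct tasks that needed more than one attempt.
function retryRate(runs: RunRecord[]): number {
  const attempts: { [taskId: string]: number } = {};
  for (const r of runs) attempts[r.taskId] = (attempts[r.taskId] || 0) + 1;
  const ids = Object.keys(attempts);
  if (ids.length === 0) return 0;
  return ids.filter((id) => attempts[id] > 1).length / ids.length;
}
```

Run both functions over a batch of runs from before the routine and a batch from after, and compare the two pairs of numbers.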

One more metric worth tracking over time: after-action review density. Are the bullets in your after-action log getting more specific and actionable over successive runs? That’s the signal that the feedback loop is tightening and the routine is learning from itself. If the reviews are all “Completed run. No durable notes extracted,” the routine is running but not learning. Tune the briefing, tighten the checklist, give the agent better raw material to reflect on.

Memory Is a Behavior, Not a Capacity

The common framing for agent memory is about capacity. How many tokens can we fit? How much can we retrieve? How big is the vector store? How many embeddings can we cram into the retrieval index?

That’s the wrong frame. Memory isn’t about how much you can hold, it’s about what you load, when you load it, and what you do with the results. Memory is a behavior. A discipline. A routine. The agent with 200K tokens of context that loads the wrong 200K will underperform the agent with 8K tokens of precisely the right context every single time.

Performance is the same way. It’s not about raw capability. It doesn’t matter which model it is: GPT-5, Claude, Gemini. They’re all smart enough. The gap between a mediocre agent and a great one isn’t the model. It’s the preparation. It’s whether the agent starts each run with a clean, bounded, relevant context or whether it starts with whatever happened to be lying around from the last session.

The saying goes that performance is preparation plus execution, but for the bot, performance is preparation plus automation. Front-load the thinking into the briefing. Automate the discipline with hooks. Make execution boring and deterministic. Write the after-action review so the next run starts even better.

Stop injecting more. Start injecting better and earlier.

Give your agent a routine. Make it boring. Make it consistent. Make it automatic.

Then watch what happens when the smart model finally has a stable foundation to be smart on top of.