Why Your AI Agent Ignores Its Own Instructions

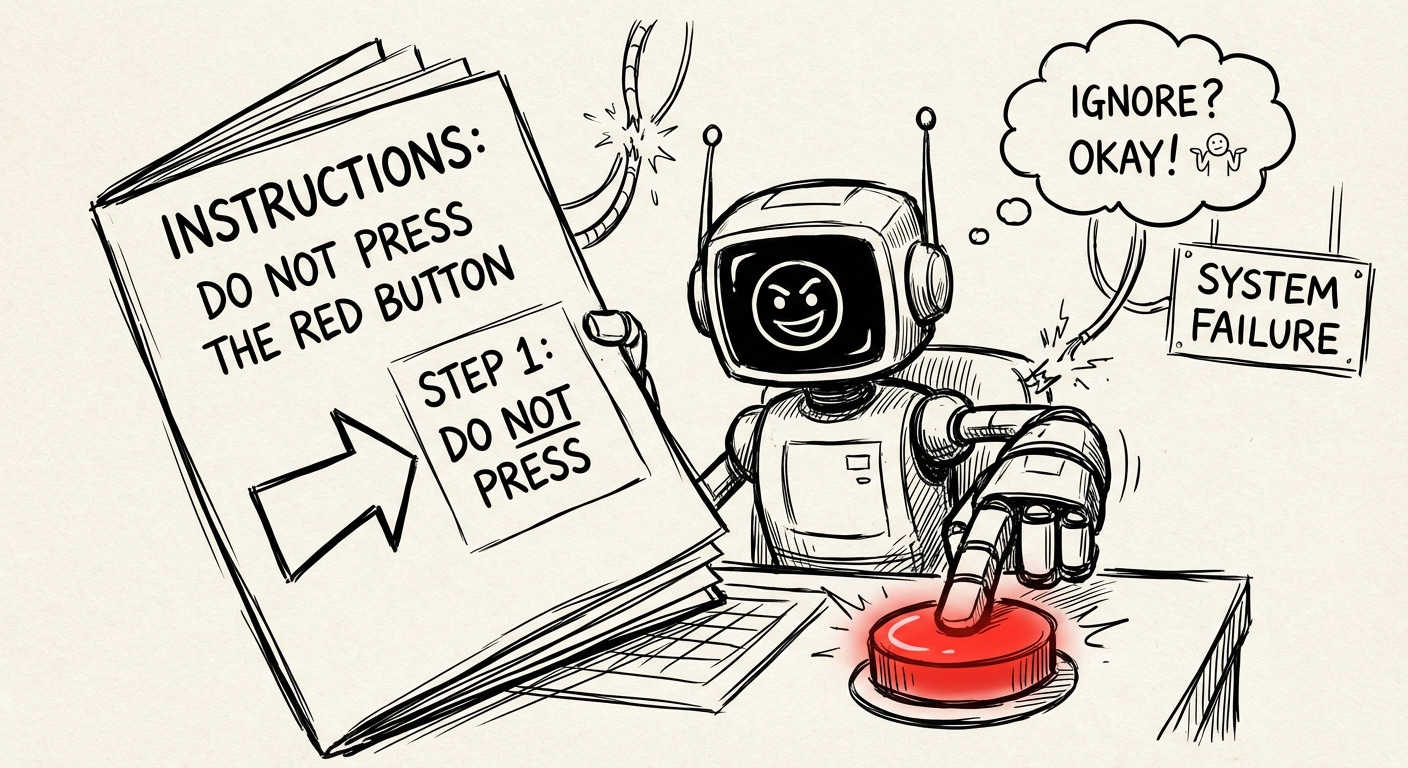

My AI agent follows directions exactly as well as my kids do

This is part of a series on AI agent architecture. Previously: AI Memory Systems, Pre-Game Routines, Why Your Agent Deserves Its Own Email.

I recently wrote a 2,000-word document explaining exactly how my AI agent should manage its memory. Checkpoint frequently. Search before answering questions about past work. Write breadcrumbs before context gets compacted. Simple stuff.

The agent read it, understood it, and then it ignored it anyway.

This kept happening. Storage wasn’t the problem. I’d review transcripts and find obvious moments where the agent should have checkpointed but didn’t. Questions about past projects were answered from vibes (read: hallucinations) instead of notes. Worst of all, context would be lost after compaction because the agent never bothered to save anything. It didn’t matter how good the memory management design was.

The frustrating part: the agent could explain perfectly well why checkpointing matters. It just didn’t do it.

Instructions Don’t Work

There’s a concept in psychology called the intention-action gap. People know they should exercise, save money, eat better. They genuinely intend to. And then they don’t.

The same thing happens with AI agents. Your AGENTS.md can say “always read your daily notes” and the agent will agree that’s a good idea. But when a user asks a question and the agent is 3,000 tokens into a response, it won’t stop to read notes. It’ll answer from context and/or hallucinate.

This happens for a few reasons:

Attention decay. Instructions at the start of context get weaker as the context window fills up. By the time an agent has been working for a while, the “remember to checkpoint!” instruction is buried under thousands of tokens of conversation. It’s still technically there. The model just isn’t attending to it anymore.

No enforcement mechanism. There’s no consequence for skipping a checkpoint. The agent doesn’t feel pain when it fails to save context before compaction. It just wakes up amnesiac in the next session and does its best. The failure mode is invisible from inside.

Cognitive load. When an agent is deep in a multi-step task (debugging code, analyzing documents, building something), the meta-work of memory management competes for the same attention budget. Memory work loses because it feels like overhead, not progress.

Cross-session blindness. Most agent frameworks treat each session as isolated. Your Telegram session doesn’t know what your Discord session was doing. When you come back tomorrow, you start from scratch. The agent might have done great work yesterday, but if it didn’t save it, that work is gone.

The common solution is to write more instructions. Add more reminders. Make AGENTS.md longer and more emphatic. But this just feeds the problem. More tokens, more attention dilution, same outcomes.

Enforcement > Instructions

Here’s the insight that actually fixed this: you can’t rely on the agent to remember to do memory management. You need to make memory management happen regardless of what the agent is thinking about.

The solution is middleware, not prompting.

I built a plugin called Memory Guardian that hooks into OpenClaw’s extension system. Instead of telling the agent what to do, it intercepts key moments and handles memory automatically. The agent doesn’t have to remember because the system does it for it.

The plugin uses seven hooks:

1. Context injection (before every turn). The plugin loads today’s and yesterday’s daily notes and injects them into context. The agent doesn’t have to decide to read them. They’re already there.

2. Checkpoint gate (before every turn). Tracks how many tool calls have happened since the last write to memory/. At 12 calls, it adds a gentle reminder to context. At 20, a firm one. At 30, it injects a “STOP. Checkpoint NOW.” instruction. The agent can still ignore this, but the escalating pressure usually works.

3. Pre-compaction breadcrumb. When OpenClaw is about to compress context, the plugin fires first and writes a breadcrumb to the daily notes. Current topic, recent decisions, what was happening. This runs automatically, not at the agent’s discretion.

4. Pre-reset breadcrumb. Same thing, but for /reset and /new commands. Anything the agent would lose, it saves first.

5. Memory search tracking. Counts turns since the agent last ran memory_search. After 8 turns, it starts adding reminders such as: “You haven’t searched memory in a while. If you’re answering from recall, consider checking your notes.”

6. Cross-session state. When any session writes or edits a file, the plugin appends a line to a shared state file. Other sessions see this on their next turn. Now your Telegram session knows your Discord session was editing code 20 minutes ago.

7. Auto-breadcrumbs. Every 10 tool calls, the plugin writes a short breadcrumb to the daily notes. Session, tool count, recent tools used. Even if the agent never checkpoints, there’s a trail.
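Several of these hooks (the breadcrumbs and the cross-session state) come down to the same move: append one timestamped line to a file without asking the agent. Here is a minimal sketch of that pattern in Python. It is not the plugin’s code and does not use OpenClaw’s extension API; the function names, the `breadcrumb:` label, and the state file location are all assumptions made for illustration.

```python
from datetime import datetime
from pathlib import Path


def append_line(path: Path, line: str) -> None:
    """Append one line, creating the file and parent directories if needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(line.rstrip("\n") + "\n")


def write_breadcrumb(memory_dir: Path, now: datetime, session: str, summary: str) -> Path:
    """Append a breadcrumb to the daily notes file for the date in `now`.

    The line format is hypothetical; the post only says what a breadcrumb
    contains, not how it is laid out.
    """
    notes = memory_dir / f"{now:%Y-%m-%d}.md"
    append_line(notes, f"- {now:%H:%M} [{session}] breadcrumb: {summary}")
    return notes


def record_file_change(state_file: Path, now: datetime, session: str,
                       action: str, target: str) -> None:
    """Append a cross-session state line that other sessions can read later."""
    append_line(state_file, f"- {now:%H:%M} [{session}] {action}: {target}")
```

Appending rather than rewriting keeps each hook cheap and makes it hard for one session to clobber another’s trail. A real plugin would also need to trim the shared state file so it does not grow without bound.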

What This Actually Looks Like

Here’s what gets injected into context on a typical turn:

# Memory Guardian Context

⚠️ CHECKPOINT NEEDED (15 tool calls without writing to memory):

Consider pausing to write a checkpoint to memory/2026-02-16.md.

## Today's Notes (2026-02-16)

[contents of today's daily notes]

## Yesterday's Notes (2026-02-15)

[truncated contents of yesterday's notes]

## Cross-Session State

- 14:32 [telegram:main] Write: drafts/post.md

- 14:28 [discord:dev] Edit: src/index.ts

The agent sees this every turn. It knows what it’s been doing. It knows what other sessions have been doing. It knows when it needs to checkpoint. And if it still doesn’t checkpoint, the plugin handles the critical moments (pre-compaction, pre-reset) automatically.
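To make the shape of that injected block concrete, here is a rough Python sketch of how it could be assembled. The function names, the `[truncated]` marker, and the character limit are assumptions for illustration, not the plugin’s actual implementation or config.

```python
from typing import List, Optional


def truncate(text: str, max_chars: int) -> str:
    """Cut text to max_chars and mark the cut so the agent knows it is partial."""
    if len(text) <= max_chars:
        return text
    return text[:max_chars].rstrip() + "\n[truncated]"


def build_context(today: str, today_notes: str,
                  yesterday: str, yesterday_notes: str,
                  state_lines: List[str],
                  warning: Optional[str] = None,
                  yesterday_max_chars: int = 1000) -> str:
    """Render the per-turn context block: warning, notes, cross-session state."""
    parts = ["# Memory Guardian Context"]
    if warning:
        parts.append(warning)
    parts.append(f"## Today's Notes ({today})\n\n{today_notes}")
    # Yesterday's notes are shortened; today's are injected in full.
    parts.append(
        f"## Yesterday's Notes ({yesterday})\n\n"
        f"{truncate(yesterday_notes, yesterday_max_chars)}"
    )
    if state_lines:
        parts.append("## Cross-Session State\n\n" + "\n".join(state_lines))
    return "\n\n".join(parts)
```

Because the function is pure (strings in, string out), it can be tested without a running agent, and the hook that calls it only has to read the files and pass their contents along.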

The Psychology Behind It

This maps pretty cleanly to how we handle intention-action gaps in humans.

You don’t rely on willpower to remember to take medication. You set an alarm, or you put the pills next to your coffee maker. Environmental design over intention.

You don’t trust yourself to save money. You set up automatic transfers. Systems over self-discipline.

Same principle here. The agent’s “intention” to manage memory well is unreliable. So you build systems that make good memory management the default, and bad memory management harder to do.

The checkpoint gate is essentially a forcing function. The agent can still skip checkpoints, but it has to actively ignore escalating warnings to do so. Most of the time, it doesn’t.
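A minimal sketch of that escalation logic, in Python for readability. The thresholds (12, 20, 30) and the hard-stop wording come from the description above; the class name, the tool names `write` and `edit`, and the wording of the two softer reminders are assumptions, not the plugin’s real API.

```python
from dataclasses import dataclass
from typing import Optional

# Thresholds from the post: gentle at 12, firm at 20, hard stop at 30.
GENTLE, FIRM, STOP = 12, 20, 30


@dataclass
class CheckpointGate:
    calls_since_write: int = 0

    def record_tool_call(self, tool: str, path: str = "") -> None:
        """Count a tool call; a write or edit under memory/ resets the counter."""
        # Tool names here are hypothetical stand-ins for the framework's own.
        if tool in ("write", "edit") and path.startswith("memory/"):
            self.calls_since_write = 0
        else:
            self.calls_since_write += 1

    def reminder(self) -> Optional[str]:
        """Return the reminder to inject this turn, or None below the first threshold."""
        n = self.calls_since_write
        if n >= STOP:
            return "STOP. Checkpoint NOW."
        if n >= FIRM:
            return f"Checkpoint required: {n} tool calls without writing to memory."
        if n >= GENTLE:
            return f"Consider checkpointing: {n} tool calls without writing to memory."
        return None
```

The counter lives outside the model, so it cannot decay with the context window. The only thing the agent controls is whether it acts on the reminder, and the same counter pattern would work for tracking turns since the last memory search.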

What This Fixed

Before Memory Guardian:

Regularly lost context after compaction

Answered questions about past work incorrectly

Same questions got re-asked across sessions

Daily notes were sparse or nonexistent

After:

Breadcrumbs automatically saved before compaction

Daily notes have a reliable trail of what happened

Cross-session awareness of what other sessions are doing

Checkpoint warnings actually work (agent responds to escalation)

The agent still isn’t perfect at memory management. But the floor is much higher. Even on a bad day, there’s a trail to follow.

Try It Yourself

The plugin is MIT licensed and works with OpenClaw v0.4.0+. You can grab it on GitHub.

Drop it in .openclaw/extensions/memory-guardian/, restart your gateway, and you’re done. The config at the top of the file lets you tune the reminder frequencies, how much of daily notes to inject, and other details.

If you’re building agents and fighting the memory problem, I think this is the right layer to fix it. The answer isn’t more instructions or longer prompts. It’s middleware that handles the boring parts so your agent can focus on the actual work.